If you are taking a course in algorithms, you have probably encountered the Master Theorem. It is a tool that helps you compute the time complexity of a divide-and-conquer algorithm of the form :

More formally, if the running time of the algorithm is denoted by \(T(n)\), then the Master Theorem considers recurrences of the form: \[T(n) = \color{red}{a}\color{black}T(n/\color{blue}{b}\color{black}) + \color{green}f(n)\] where \(\color{red}a\) and \(\color{blue}{b}\) are constants, and \(\color{green}f\) represents the running time of combining the solutions of the subproblems (step 3 above).

You probably also remember the Master Theorem as a set of 3 rules that you need to either memorize or look up every time you need to use it. In this post, I will try to give you an intuition behind the Master Theorem. The intuition is not my own, I got it from Prof. Kapralov’s lectures on algorithms.

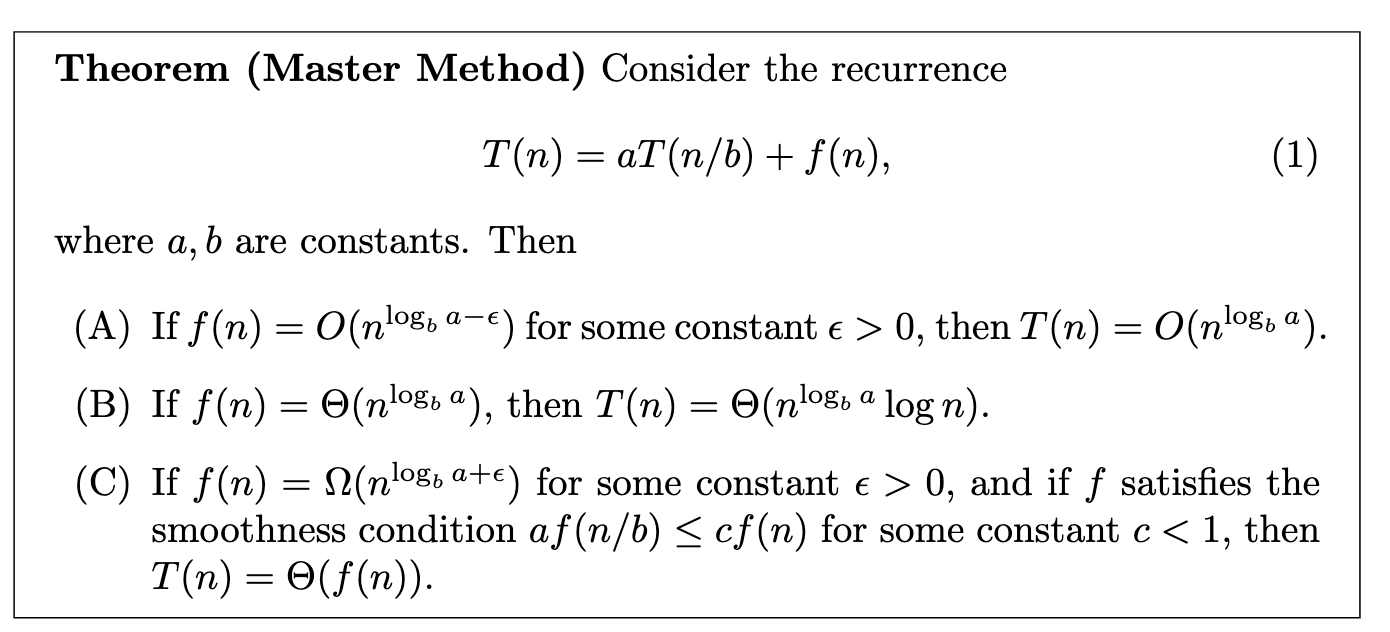

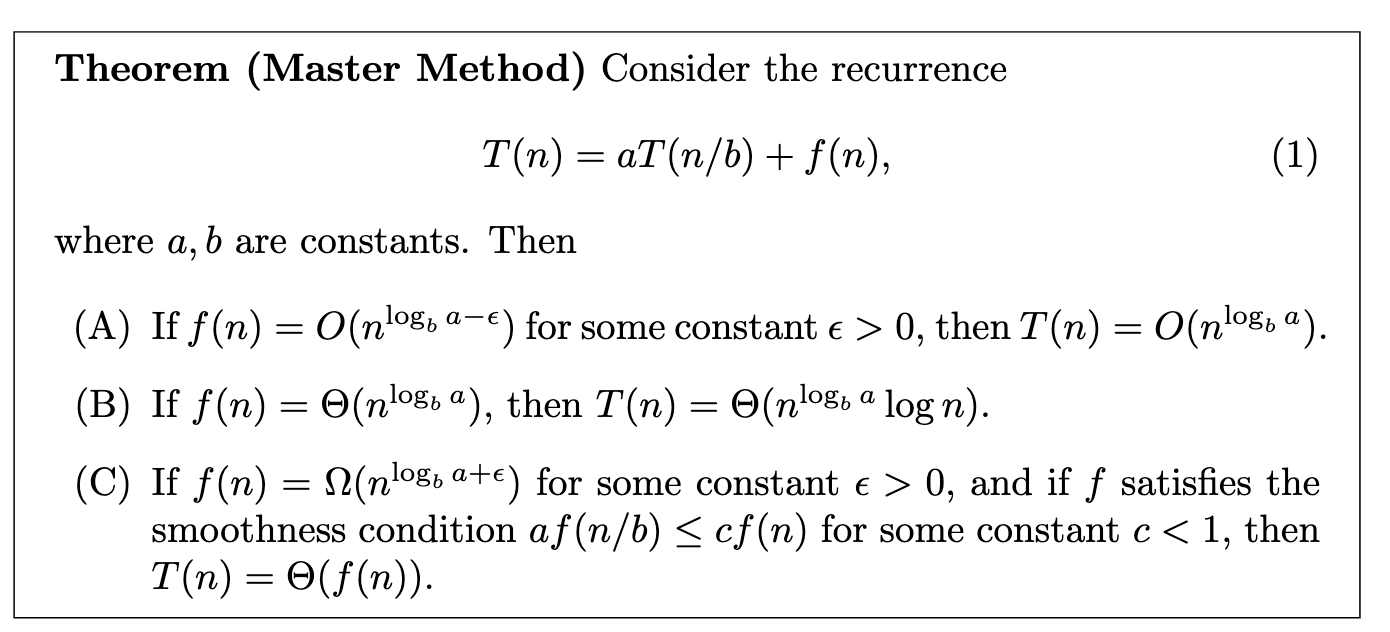

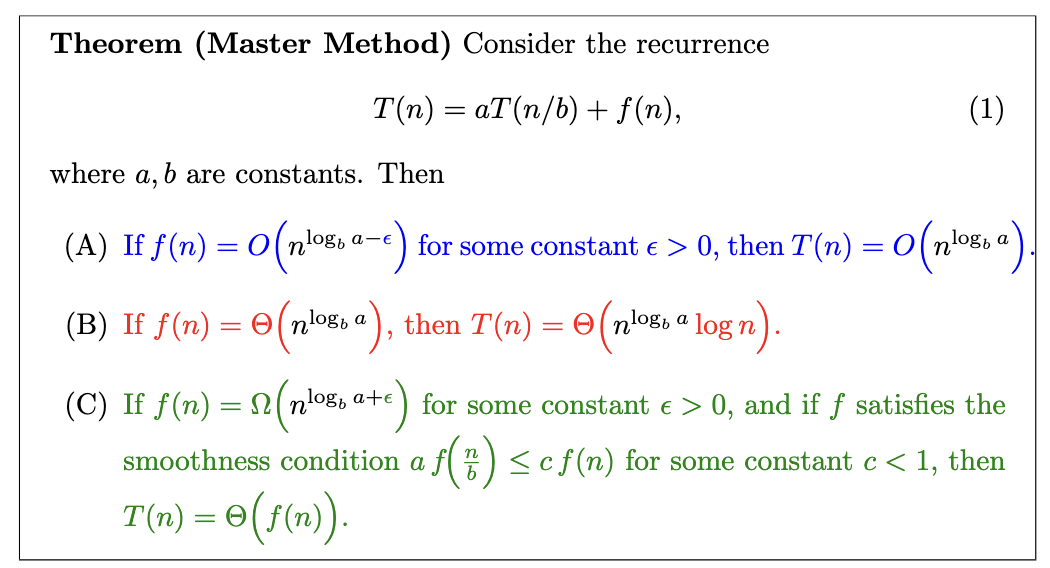

This statement can be a bit intimidating at first. But let’s break it down.

We have 3 cases, each corresponding to a different relationship between \(f(n)\) and \(n^{\log_b a}\).

There’s a quantity that comes up in each case: \(n^{\log_b a}\).

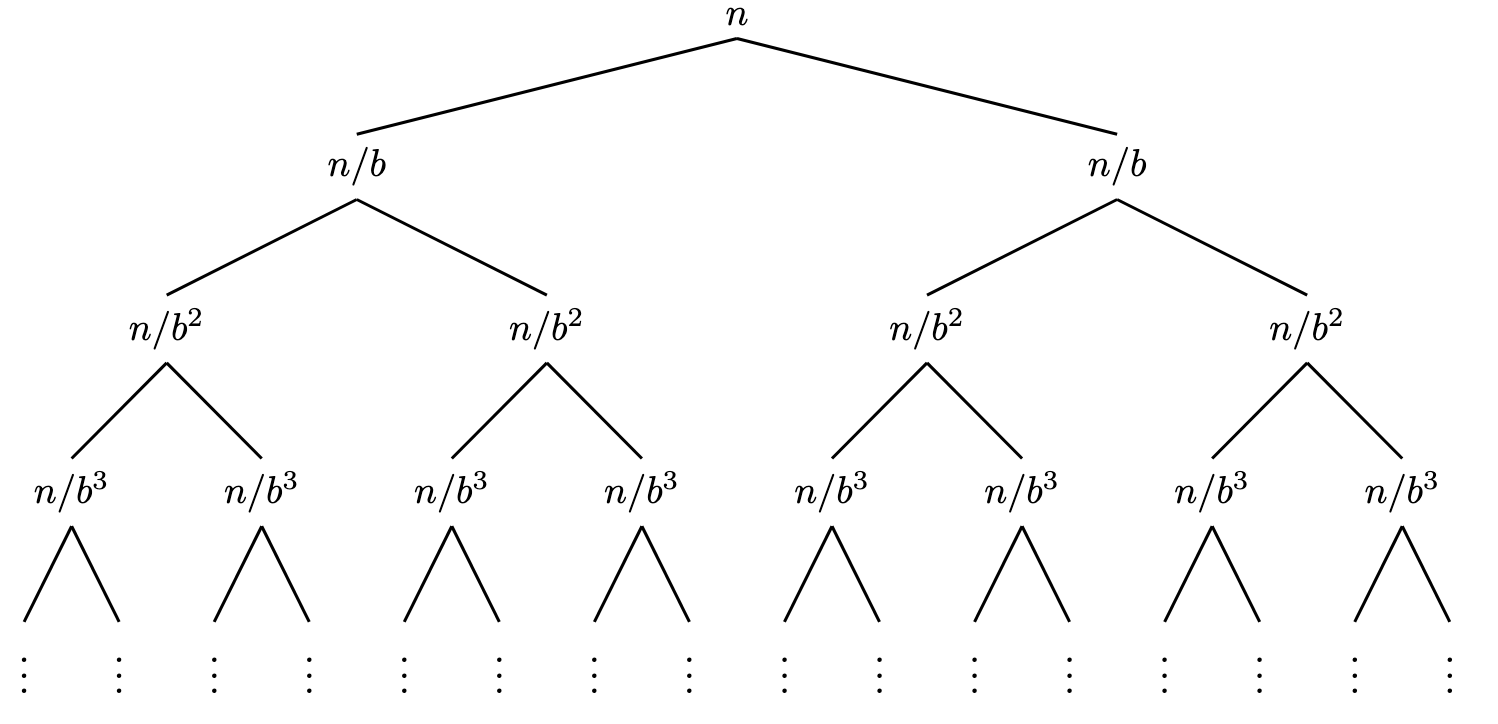

It is critical to understand what the quantity \(n^{\log_b a}\) represents. To understand it, let’s start by computing the number of leaf node in the computational tree. Each node in this tree represents a subproblem (a function call). This tree is represented in the figure below where \(a=2\).

The height of this tree is \(\log_b(n)\) and each node has \(a\) children. So the number of leaves is \(a^{\log_b(n)}\). Now if we massage this expression a bit, we get : \[ \color{red}a\color{black}^{\log_b(n)} = \left( \color{red} b^{\log_b a} \color{black} \right)^{\log_b(n)} = \left( b^{\log_b(n)} \right)^{\log_b a} = n^{\log_b a}\] where the first equality comes from the identity \(a = b^{\log_b a}\) and the second from swapping the exponents. So \(n^{\log_b a}\) is nothing but the number of leaves in the computational tree !

Let’s observe that the first function call (the root of the tree) takes \(f(n)\) time (\(f(n)\) time for combining the results and the rest is done by the other recursive calls). The very last function calls (leaves) do just a constant amount of work, so their total time would be \(\text{\#leaves} \times \text{constant}\) i.e \(\Theta(n^{\log_b a})\). So :

|

work is concentrated at the leaves \(f(n)\) is \(O(n^{\log_b a - \epsilon})\), so the work done at the root is “smaller”1 than the work done at the leafs. total runtime is the total runtime of the leaves \(T(n) = \Theta(n^{\log_b a})\) |

|

work at each level of the tree is the same work at root = work at leaf \(f(n) = \Theta(n^{\log_b a})\) \(\textbf{work per level} \times \textbf{height}\) \(n^{\log_b a} \times \log_b(n)\) |

|

work is concentrated at the root \(f(n) = \Omega(n^{\log_b a + \epsilon})\), so it’s “greater”\(^1\) than the leaves’ total runtime. total runtime is the total runtime of the root \(T(n) = \Theta(f(n))\) |

Basically, each case of the master theorem is a comparison between the work done at the root and the work done at the leaves. The three cases correspond to when the leaves dominate, when both are equal, and when the root dominates. And the total runtime is the total runtime of the dominating part (or \(\text{height}\times \text{work per level}\) when they are equal).

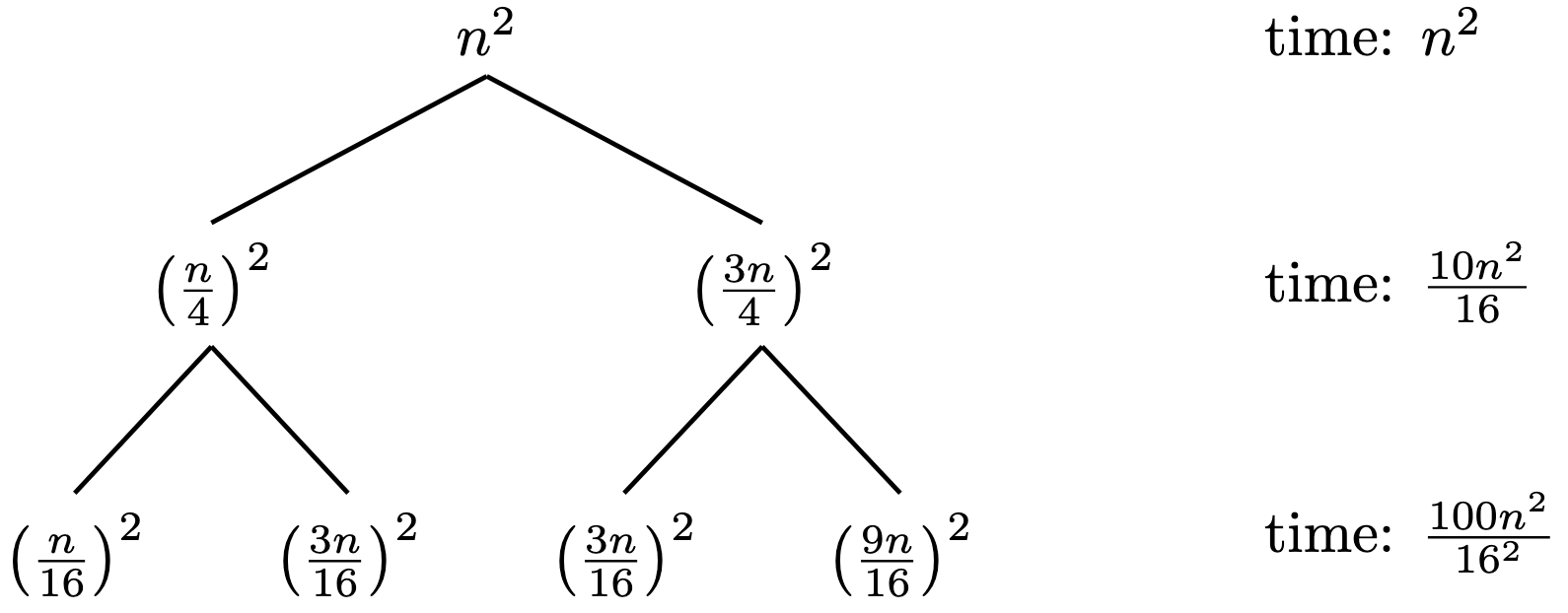

Let’s consider the following recurrence : \[T(n) = T(n/4) + T(3n/4) + n^2\] This recurrence does not fit the form of the Master Theorem. However, we can still use the intuition we developed to have a good guess of the time complexity, which we confirm by a proof by induction.

The root of the tree takes \(n^2\) time. Its children take \((\frac{3n}{4})^2\) and \((\frac{n}{4})^2\) each. So the second level of the tree takes \(\frac{10n^2}{16}\) time. We can also check the third level. What we find is that the work done at each level is decreasing. Meaning that intuitively the work will concentrate at the root. So a good guess would be that \(T(n) = O(f(n)) = O(n^2)\).

Let’s prove this by induction. The base case is trivial. For the induction step, we assume that \(T(k) \leq c k^2\) for all \(k < n\). Then we have : \[T(n) = T(n/4) + T(3n/4) + n^2 \leq c \left( \frac{n}{4} \right)^2 + c \left( \frac{3n}{4} \right)^2 + n^2 = (1+\frac{9c}{16}) n^2 \leq c n^2\]

if we choose \(c \geq 16/7\). Which ends the proof and confirms our guess.

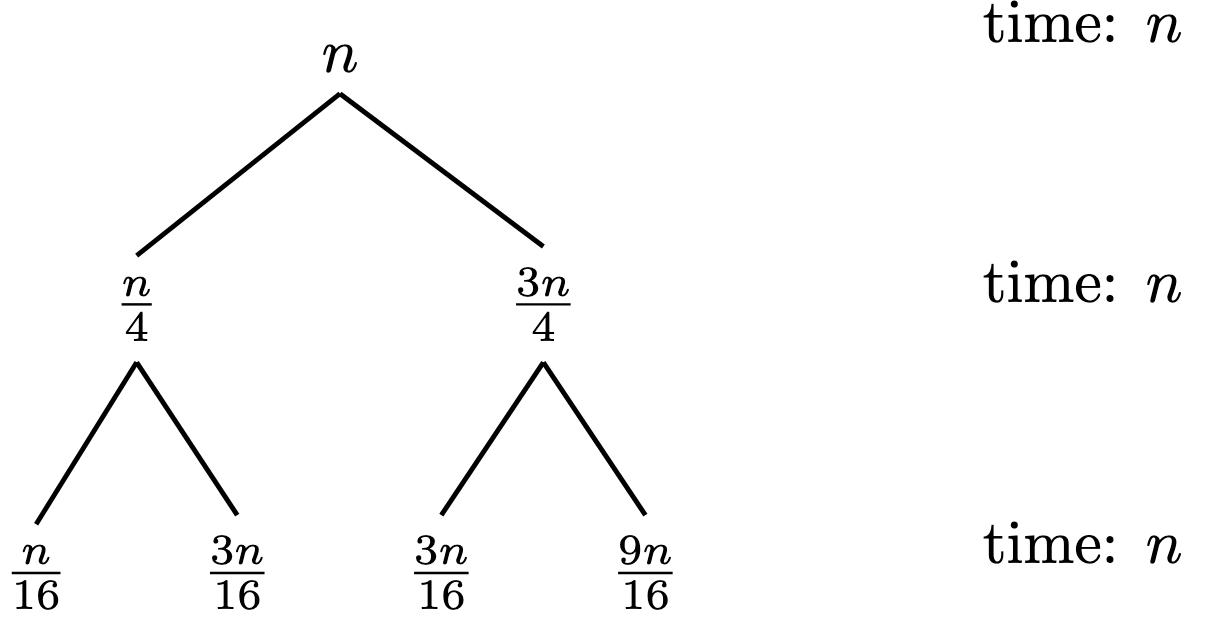

Now let’s change \(f(n)\) to \(n\). We get the recurrence : \[T(n) = T(n/4) + T(3n/4) + n\] This time, the root takes \(n\) time, and its children take \(\frac{3n}{4}\) and \(\frac{n}{4}\) each. So the second level of the tree takes \(n\) time. We can check that it will be the same for next levels. So the work is concentrated at the leaves. A good guess would be that \(T(n) = O(n \log n)\). Which we also can prove by induction.

“smaller” or “greater” here are the asymptotic sense.↩︎